As a fervent AI enthusiast, I disagree.

…I’d say it’s 97% hype and marketing.

It’s crazy how much FUD is flying around, and it legitimately buries good open research. It’s also crazy that these giant corporations are explicitly saying what they’re going to do, and that anyone buys it. TSMC’s allegedly calling Sam Altman a ‘podcast bro’ is spot on, and I’d add “manipulative vampire” to that.

Talk to any long-time resident of localllama and similar “local” AI communities who actually dig into this stuff, and you’ll find immense skepticism, not the crypto-like AI bros you find on LinkedIn, Twitter and such, who blot everything out.

For real. Being a software engineer with basic knowledge in ML, I’m just sick of companies from every industry being so desperate to cling onto the hype train they’re willing to label anything with AI, even if it has little or nothing to do with it, just to boost their stock value. I would be so uncomfortable being an employee having to do this.

The saddest part is, this is going to cause yet another AI winter. The first few were caused by genuine over-enthusiasm, but this one is purely fuelled by greed.

Agreed, that’s why it’s so dangerous. These tech bros are going to do damage with their shitty products. It seems like it’s Altman’s goal, honestly.

I think we should indict Sam Altman on two sets of charges:

- A set of securities fraud charges.
- 8 billion counts of criminal reckless endangerment.

He’s out on podcasts constantly saying that OpenAI is near superintelligent AGI, that there’s a good chance they won’t be able to control it, and that human survival is at risk. How is gambling with human extinction not a massive act of planetary-scale criminal reckless endangerment?

So either he is putting the entire planet at risk, or he is lying through his teeth about how far along OpenAI is. If he’s telling the truth, he’s endangering us all. If he’s lying, then he’s committing securities fraud in an attempt to defraud shareholders. Either way, he should be in prison. I say we indict him for both simultaneously and let the courts sort it out.

TSMC are probably making more money than anyone in this gold rush by selling the shovels and picks, so if that’s their opinion, I feel people should listen…

There’s little in the AI business plan other than hurling money at it and hoping job losses ensue.

After getting my head around the basics of the way LLMs work I thought “people rely on this for information?”, the model seems ok for tasks like summarisation though

I don’t love it for summarization. If I read a summary, my takeaway may be inaccurate.

Brainstorming is incredible. And revision suggestions. And drafting tedious responses, reformatting, parsing.

In all cases, nothing gets attributed to me unless I read every word and am in a position to verify the output. And I internalize nothing directly, besides philosophy or something. Sure can be an amazing starting point especially compared to a blank page.

the model seems ok for tasks like summarisation though

That and retrieval are the business use cases so far, but even then only if it’s acceptable for the results to be wrong somewhat frequently.

Ya, it’s like machine learning but better. That’s about it IMO.

Edit: As I have to spell it out: as opposed to (machine learning with) neural networks.

I mean… it is machine learning.

It’s also neural networks, and probably some other CS structures.

AI is a category, and even specific implementations tend to use multiple techniques.

Well, there is a very specific architecture “rut” that the LLMs people use have fallen into, and even small attempts to break out (like with Jamba) don’t seem to get much interest, unfortunately.

Sure, but LLMs aren’t the only AI being used, nor will they eliminate the other forms of AI. As people see issues with the big LLMs, development focus will change to adopt other approaches.

There is real risk that the hype cycle around LLMs will smother other research in the cradle when the bubble pops.

The hyperscalers are dumping tens of billions of dollars into infrastructure investment every single quarter right now on the promise of LLMs. If LLMs don’t turn into something with a tangible ROI, the term AI will become every bit as radioactive to investors in the future as it is lucrative right now.

Viable paths of research will become much harder to fund if investors get burned because the business model they’re funding right now doesn’t solidify beyond “trust us bro.”

TSMC’s allegedly calling Sam Altman a ‘podcast bro’ is spot on, and I’d add “manipulative vampire” to that.

What’s the source for that? It sounds hilarious

When Mr. Altman visited TSMC’s headquarters in Taiwan shortly after he started his fund-raising effort, he told its executives that it would take $7 trillion and many years to build 36 semiconductor plants and additional data centers to fulfill his vision, two people briefed on the conversation said. It was his first visit to one of the multibillion-dollar plants.

TSMC’s executives found the idea so absurd that they took to calling Mr. Altman a “podcasting bro,” one of these people said. Adding just a few more chip-making plants, much less 36, was incredibly risky because of the money involved.

Yep the current iteration is. But should we cross the threshold to full AGI… that’s either gonna be awesome or world ending. Not sure which.

Based on what I’ve witnessed so far, people will play with their AGI units for a bit and then put them down to continue scrolling memes.

Which means it is neither awesome, nor world-ending, but just boring/business as usual.

Yup.

I don’t know why. The people marketing it have absolutely no understanding of what they’re selling.

Best part is that I get paid if it works as they expect it to and I get paid if I have to decommission or replace it. I’m not the one developing the AI that they’re wasting money on, they just demanded I use it.

That’s true software engineering, folks. Decoupling doesn’t just make it easier to program and reuse, it saves your job when you need to retire something later too.

What happened to Linus? He looks so old now…

Wow, yeah that’s a big difference from how I remember him

He got old.

An excessive amount of aging is what folks are seeing, not just that he’s old.

He’s lost a lot of weight in 4 years so that’s probably exacerbating the wtf.

If you find out what happened, let me know, because I think it’s happening to me too.

I admit I understand nothing about AI and haven’t used it in any way, nor do I plan to. It feels wrong to me and I believe it might fuck us harder than social media ever could.

But the pictures it creates, the stories and conversations don’t seem like hot air. And I guess, compared to the internet we are at the stage where the modem is still singing the songs of its people. There is more to come.

I heard it can code at a level where entry positions might be in danger of being swapped for AI. It detects cancer visually and, in China, recognizes people by the way they walk. I also fear that vulnerable people might fall for those conversation bots in a world where there is less and less personal contact.

Gotta admit I’m a little afraid it will make most of us useless in the future.

It makes somewhat passable mediocrity, very quickly, when directly used for such things. The stories it writes from the simplest of prompts are always shallow and full of cliché (and over-represented words like “delve”). To get it to write good prose basically requires breaking down writing, the activity, into its stream of constituent, tiny tasks and then treating the model like the machine it is. And this hack generalizes out to other tasks, too, including writing code. It isn’t alive. It isn’t even thinking. But if you treat these things as rigid robots getting specific work done, you can make them do real things. The problem is asking experts to do all of that labor to hyper-segment the work and micromanage the robot. Doing that is actually more work than just asking the expert to do the task themselves. It is still a very rough tool. It will definitely not replace the intern just yet. At least my interns submit code changes that compile.

Don’t worry, human toil isn’t going anywhere. All of this stuff is super new and still comparatively useless. Right now, the early adopters are mostly remixing what has worked reliably. We have yet to see truly novel applications. What you will see in the near future will be lots of “enhanced” products that you can talk to, whether you want to or not. The human jobs lost to the first wave of AI automation will likely be in the call center. The important industries such as agriculture are already so hyper-automated that it will take an enormous investment to automate the 2% left. Many, many industries will be that way, even after AI. And for a slightly more cynical take: human labor will never go away because having power over machines isn’t the same as having power over other humans. We won’t let computers make us all useless.

And then people will complain about that saying it’s almost all hype and no substance.

Then that one tech bro will keep insisting that lemmy is being unfair to AI and there are so many good use cases.

No one is denying the 10% use cases, we just don’t think it’s special or needs extra attention since those use cases already had other possible algorithmic solutions.

Tech bros need to realize that even if there are some use cases for AI, there has not been any revolution. Stop trying to make it happen and enjoy your new, slightly better tool in silence.

Hi! It’s me, the guy you discussed this with the other day! The guy that said Lemmy is full of AI wet blankets.

I am 100% with Linus AND would say the 10% good use cases can be transformative.

Since there isn’t any room for nuance on the Internet, my comment seemed to ruffle feathers. There are definitely some folks out there that act like ALL AI is worthless and LLMs specifically have no value. I provided a list of use cases that I use pretty frequently where it can add value. (Then folks started picking it apart with strawmen).

I gotta say though, this wave of AI tech feels different. It reminds me of the early days of the web/computing in the late 90s and early 2000s, where it’s fun, exciting, and people are doing all sorts of weird, quirky shit with it, and it’s not even close to perfect. It breaks a lot and has limitations, but there is something there. There is a lot of promise.

Like I said elsewhere, it ain’t replacing humans any time soon, we won’t have AGI for decades, and it’s not solving world hunger. That’s all hype bro bullshit. But there is actual value here.

Hi! It’s me, the guy you discussed this with the other day! The guy that said Lemmy is full of AI wet blankets.

Omg you found me in another post. I’m not even mad; I do like how passionate you are about things.

Since there isn’t any room for nuance on the Internet, my comment seemed to ruffle feathers. There are definitely some folks out there that act like ALL AI is worthless and LLMs specifically have no value. I provided a list of use cases that I use pretty frequently where it can add value. (Then folks started picking it apart with strawmen).

What you’re talking about is polarization and yeah, it’s a big issue.

This is a good example: I never strawmanned anything, nor did I disagree with the fact that it can be useful in some shape or form. I was trying to say its value is much, much lower than what people claim it to be.

But that’s the issue with polarization, me saying there is much less value can be interpreted as absolute zero, and I apologize for contributing to the polarization.

No, AI is a very real thing… just not LLMs, those are pure marketing.

Sounds about right. There are some valid and good use cases for “AI”, but the majority is just buzzword marketing.

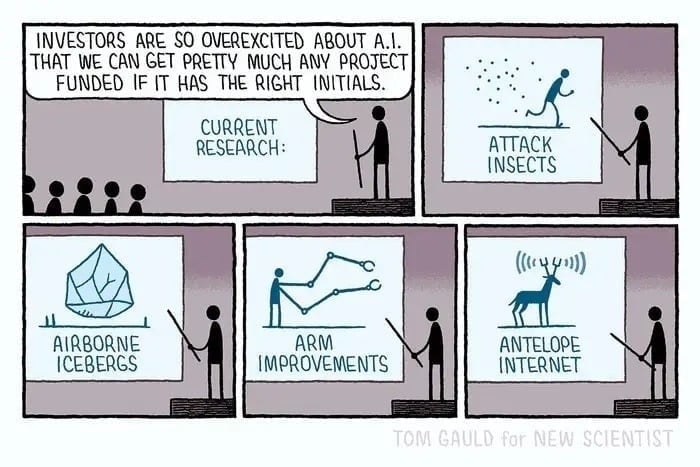

I have lots of uses for Attack Insects….

I’m down for arm improvements.

I think when the hype dies down in a few years, we’ll settle into a couple of useful applications for ML/AI, and a lot will be just thrown out.

I have no idea what will be kept and what will be tossed but I’m betting there will be more tossed than kept.

I recently saw a video of AI designing an engine, and then simulating all the toolpaths to be able to export the G code for a CNC machine. I don’t know how much of what I saw is smoke and mirrors, but even if that is a stretch goal it is quite significant.

An entire engine? That sounds like a marketing ploy. But take smaller chunks, let’s say the shape of a combustion chamber or the shape of an intake or exhaust manifold. It’s going to take white noise and just start pattern matching and, monkeys-on-typewriters style, churn out horrible pieces through a simulator until it finds something that tests out as a viable component. It has a pretty good chance of turning out individual pieces that are either cheaper or more efficient than what we’ve dreamed up.

Just chiming in as another guy who works in AI who agrees with this assessment.

But it’s a little bit worrisome that we all seem to think we’re in the 10%.

I make DNNs (deep neural networks), the current trend in artificial intelligence modeling, for a living.

Much of my ancillary work consists of deflating/tempering the C-suite’s hype and expectations of what “AI” solutions can solve or completely automate.

DNN algorithms can be powerful tools and muses in scientific endeavors, engineering, creativity and innovation. They aren’t full replacements for the power of the human mind.

I can safely say that many, if not most, of my peers in DNN programming and data science are humble in our approach to developing these systems for deployment.

If anything, studying this field has given me an even more profound respect for the billions of years of evolution required to display the power and subtleties of intelligence as we narrowly understand it in an anthropological, neuro-scientific, and/or historical framework(s).

I dunno about him; but genuinely I’m excited about AI. Blows my mind each passing day ;)

I work at a company big into AI. We build our own models. Our senior management drank the Kool-Aid. We don’t have search on our Intranet any more, just LLM chatbots.

Our TLS certificate expired last week on our main web page. I tried to find the contact details for the team responsible and the thing just hallucinated e-mail addresses.

Needless to say, I’m less excited than you.

I’m waiting for the part where it gets used for things that are not lazy, manipulative and dishonest. Until then, I’m sitting it out like Linus.

AI has been used for these things for decades; they are just in the background and not noticed by laypeople.

Though the biggest issue is that when people say “AI” today, they mean specifically LLMs, but the world of AI is so much larger than that.